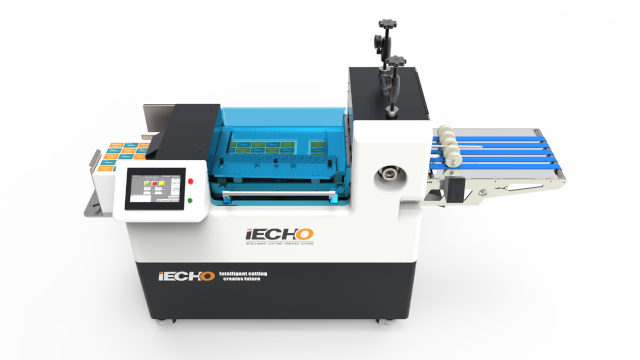

Company forms useful in every business

CARBONLESS COPY FORMS

Carbonless pads, also known as carbonless copy forms, are sets of paper used to make copies of documents or forms without a photocopier or printer. They consist of several layers of paper, usually three: the original (the first copy), a second copy and, optionally, a third. When you write on the original (the top layer), pressing down with a pen or pencil, you transfer impressed copies onto […]